How Aviso's Large Quantitative Models Are Redefining Bottom-Up Forecasting


Sales forecasting has historically come in two flavors. The first is top-down: leadership sets a number based on market size, historical trends, and intuition, then works backward to assign quotas.

The second is bottom-up: you start with individual deals, score each one on its actual probability of closing, and aggregate upward to a forecast number. Every deal is evaluated based on real evidence, including who is engaging, what is being said, how conversations are evolving, and where the deal sits structurally in the pipeline. The aggregate of these deal-level signals becomes the forecast.
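As a rough illustration of the aggregation step, the sketch below sums probability-weighted deal amounts into a single forecast number. The `Deal` class, its field names, and the sample pipeline are hypothetical and chosen only for illustration; they are not Aviso's schema or output.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    name: str
    amount: float           # deal value in dollars
    win_probability: float  # deal-level score between 0 and 1


def bottom_up_forecast(deals):
    """Sum each deal's amount weighted by its probability of closing."""
    return sum(d.amount * d.win_probability for d in deals)


# Hypothetical pipeline, for illustration only.
pipeline = [
    Deal("Acme renewal", 400_000, 0.90),
    Deal("Globex expansion", 250_000, 0.40),
    Deal("Initech new logo", 350_000, 0.20),
]

forecast = bottom_up_forecast(pipeline)
print(f"Open pipeline:     ${sum(d.amount for d in pipeline):,.0f}")
print(f"Expected forecast: ${forecast:,.0f}")
```

The arithmetic is trivial; the quality of the forecast depends entirely on how well each `win_probability` reflects the real state of the deal.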

This sounds straightforward in principle. The execution is where most platforms fall short. Building a reliable bottom-up forecast requires capturing the full reality of a deal, not just fragments of it. Conversation signals surface momentum early. Interaction patterns reveal how the buying group is forming. CRM structure filters noise and prevents false positives. Pipeline dynamics ensure predictions stay grounded in operational reality.

Most systems see only one of these layers. As a result, they either miss early signals or overreact to incomplete ones.

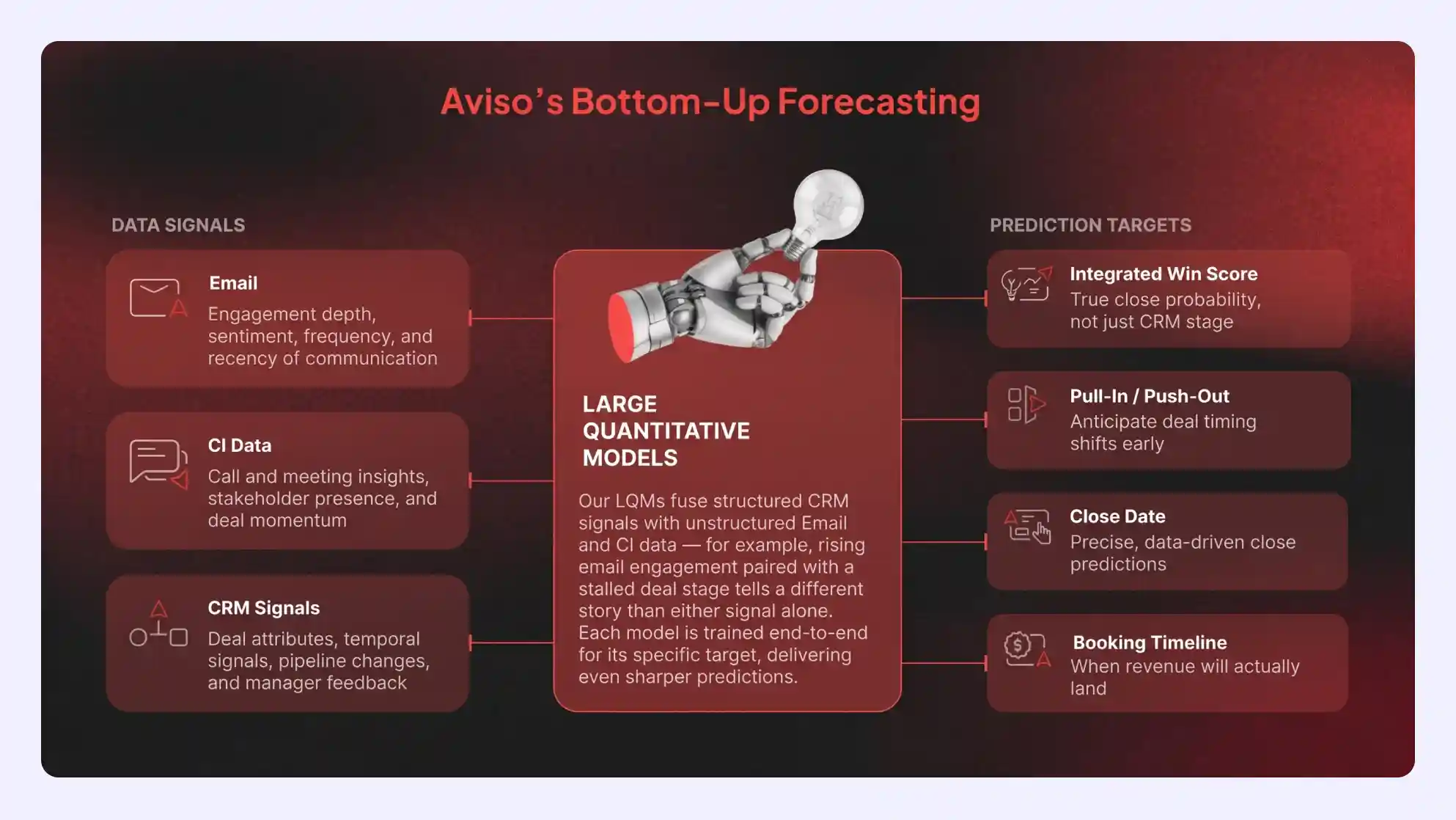

This is what Aviso was built to solve. Our approach is powered by Large Quantitative Models (LQMs), which fuse conversation data, engagement patterns, CRM structure, and pipeline dynamics into a single unified system. Instead of relying on isolated signals, LQMs bring these perspectives together to produce a more complete and continuously updated view of every deal.

Why Deal-Level Scoring Has Always Had a Data Problem

Bottom-up forecasting is more accurate than top-down forecasting, but it is harder to execute because of signal complexity. Deals generate constant data across CRM updates, emails, meetings, calls, and stakeholders, all of which must be interpreted in near real time.

The challenge is that the signals available to most forecasting systems are either too structured to capture what is really happening or too noisy to trust at scale. Most platforms are built around one of two approaches, and each has a critical failure mode.

CRM-only approach: Provides the structural backbone, including stage, forecast, close date, and pipeline position. These are useful baseline signals, but they inherently lag what is actually happening in a deal. By the time CRM fields update, the momentum shift has already happened, often days or weeks earlier.

Conversation-only approach: Relies heavily on email, call, and meeting intelligence. These signals can be rich, but they are also noisy and prone to false positives. A busy email thread may look like momentum when it’s actually a dispute or scope change.

CRM shows structure. Conversations show activity. Neither alone is reliable. Accurate forecasting requires combining both worlds.

LQMs at Aviso: The Quantitative Backbone of an Intelligent Revenue Engine

Aviso's Large Quantitative Models (LQMs) are the intelligence backbone of the platform, unifying signals across the full deal lifecycle. Instead of isolating CRM data, email activity, and conversation intelligence, LQMs integrate them into a single unified model trained end-to-end for each prediction target.

At this layer, the system does more than collect inputs; it interprets relationships between them. It learns how engagement intensity interacts with deal stage progression, how stakeholder coverage influences conversion likelihood, and how shifts in activity patterns signal changes in deal trajectory. Structured data provides consistency, while unstructured data introduces nuance and context. The model continuously recalibrates these interactions across historical outcomes, allowing it to distinguish between superficial activity and signals that meaningfully change the probability of a deal closing.

Each LQM is purpose-built for a revenue outcome: win probability, forecast accuracy, deal risk, rep performance. The model learns from the complete data exhaust of a B2B sales cycle and outputs a single, continuously updated score per deal.

Instead of insights operating in isolation, LQMs connect the dots. They recognize when a high-commit deal with no recent meetings, weak engagement, and repeated stage slippage signals systemic risk. The LQM quantifies the forecast impact and recommends exactly what to do next, whether that is escalating internally or re-engaging the buyer, so every action is grounded in connected, data-driven reasoning.

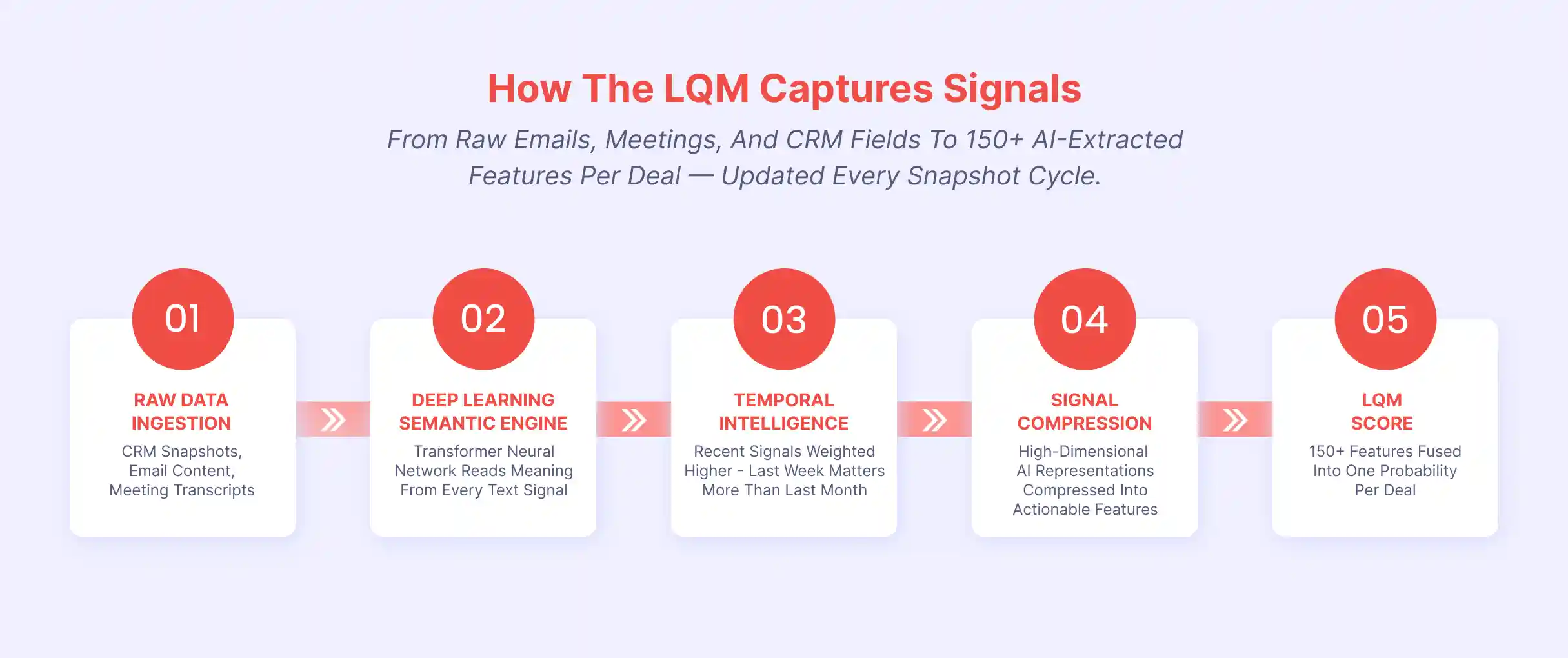

How LQMs Capture and Process Signals

Each LQM ingests raw data from three primary streams, extracts 150+ AI-derived features per deal, and assembles them through a five-stage pipeline that ends in a single win probability score, updated on every snapshot cycle. Here is how each stage works.

Raw Data Ingestion

The LQM begins by pulling in structured and unstructured data from three primary streams, refreshed on every snapshot cycle. Each stream contributes a distinct type of intelligence, and all three are required to produce a score that reflects the full reality of a deal.

| Data Stream | What Goes In | What the AI Extracts |
|---|---|---|
| CRM | Stage, Amount, Forecast, Close Date, Owner history, field update timestamps | Structural grounding, temporal distance signals, pipeline dynamics, field recency patterns, and AI-extracted semantic features from free-text fields like NextSteps |
| Email / Conversation | Every email thread: who wrote, when, to whom, what was attached, what was said | Buyer seniority mapping, engagement velocity, response patterns, CC network trends, and deep semantic understanding of email content via neural embeddings |
| Meetings / Interactions | Every call and meeting: who attended, how long, what was discussed, what was the tone | Executive involvement patterns, stakeholder network depth, meeting momentum, and AI-detected language signals: urgency, escalation, commitment, objections |

Across all three streams, the LQM extracts 150+ AI-derived features per deal on each snapshot cycle. These features range from structural indicators like stage age and pipeline velocity to behavioral signals like buyer response latency, stakeholder network depth, and sentiment shifts in recent conversations. Because features are recomputed on every snapshot, the model never works from data older than the most recent cycle. Every change in deal behavior is reflected in the feature set at the next snapshot and, by extension, in the win probability score.
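To make two of the named features concrete, here is a minimal sketch that derives stage age and buyer response latency from raw timestamps. The function names, input shapes, and sample dates are assumptions for illustration; the article does not describe how Aviso computes these features.

```python
from datetime import datetime
from statistics import median


def stage_age_days(stage_entered_at, snapshot_at):
    """Whole days the deal has spent in its current stage as of the snapshot."""
    return (snapshot_at - stage_entered_at).days


def median_response_latency_hours(exchanges):
    """Median hours between a seller email and the buyer's reply.

    `exchanges` is a list of (sent_at, replied_at) datetime pairs.
    """
    latencies = [
        (replied - sent).total_seconds() / 3600 for sent, replied in exchanges
    ]
    return median(latencies)


# Hypothetical records for a single deal at one snapshot.
snapshot = datetime(2024, 6, 30)
features = {
    "stage_age_days": stage_age_days(datetime(2024, 6, 10), snapshot),
    "median_response_latency_hours": median_response_latency_hours([
        (datetime(2024, 6, 20, 9), datetime(2024, 6, 20, 13)),  # 4 hours
        (datetime(2024, 6, 24, 9), datetime(2024, 6, 25, 9)),   # 24 hours
        (datetime(2024, 6, 27, 9), datetime(2024, 6, 27, 19)),  # 10 hours
    ]),
}
print(features)
```

Rerunning the same functions at each snapshot is what keeps the feature set in step with the deal.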

The Semantic Engine

At the core of the LQM is a semantic engine built on a combination of transformer neural networks. This is the same architectural family that powers large language models, but here they are purpose-trained to convert unstructured sales interactions into structured, deal-relevant signals.

Transformers handle both language and time-series data, modeling temporal dependencies, seasonality, and interactions across pipeline events for forecasting and trend detection.

Traditional sales forecasting often relies on rigid rules, such as prioritizing messages based on a sender’s job title. But deal intent lives in language. Aviso’s semantic engine goes beyond keywords to interpret signals that show whether a deal is progressing or stalling. It reads communication for meaning, processing emails, meeting transcripts, and CRM text into numerical representations of intent, sentiment, urgency, and context.

Using a self-attention mechanism, the model evaluates all words in relation to each other, capturing nuance in context. Two emails from the same procurement director can signal opposite outcomes: “Budget approved. Send the final SOW.” versus “Still evaluating, circle back next quarter.” Title is one input, but language determines the signal.

Context determines the vector. Not the title.

An IC writing “security review complete, cleared to proceed” can be a stronger close signal than a VP saying “let’s reconnect next quarter.” The model learns which language patterns predict outcomes, regardless of who says them.

Each interaction is encoded into a dense vector capturing its context, then compressed into key signal dimensions and combined with structured CRM data to produce the final win score.
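The self-attention step mentioned above can be sketched in a few lines of NumPy. This is the standard single-head scaled dot-product attention from the transformer literature, shown with random stand-in embeddings and untrained weights; it is not Aviso's model, and the dimensions are arbitrary.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention over token embeddings X."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])  # every token scored against every other
    weights = softmax(scores, axis=-1)       # each row is a distribution over tokens
    return weights @ V, weights


rng = np.random.default_rng(0)
n_tokens, d_model = 5, 8                     # e.g. a five-token email snippet
X = rng.normal(size=(n_tokens, d_model))     # random stand-in embeddings
Wq, Wk, Wv = (rng.normal(size=(d_model, d_model)) for _ in range(3))

context, weights = self_attention(X, Wq, Wk, Wv)
print(context.shape)        # one context-aware vector per token
print(weights.sum(axis=1))  # attention rows sum to 1, up to float rounding
```

Because each output vector is a weighted mix of every token in the message, the same word can land on a different vector depending on what surrounds it, which is the property the section relies on.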

Temporal Intelligence

Not all signals carry equal weight. A call from yesterday is more predictive than an email from 60 days ago. A champion going silent last week matters more than an early-stage meeting. The LQM reflects this through exponential temporal weighting.

Recent signals carry more weight, while older signals decay continuously over time. This is not a cutoff. Older activity remains part of the model but does not outweigh the current state. Time-decay controls weighting, not memory. The system is recency-weighted, not recency-only.
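A minimal sketch of exponential time decay follows. The 14-day half-life and the signal values are assumptions picked for illustration; the article does not state the decay rate Aviso uses.

```python
import math


def decay_weight(age_days, half_life_days=14.0):
    """Exponential decay: the weight halves every `half_life_days` and never reaches zero."""
    return math.exp(-math.log(2) * age_days / half_life_days)


def recency_weighted_average(signals, half_life_days=14.0):
    """Weighted mean of (age_days, value) pairs, with recent signals counting more."""
    weights = [decay_weight(age, half_life_days) for age, _ in signals]
    weighted_sum = sum(w * value for w, (_, value) in zip(weights, signals))
    return weighted_sum / sum(weights)


# Hypothetical sentiment signals: a negative call yesterday, a positive email 60 days ago.
signals = [(1, -0.6), (60, 0.8)]

for age, value in signals:
    print(f"age={age:>2}d  value={value:+.1f}  weight={decay_weight(age):.3f}")
print(f"recency-weighted sentiment: {recency_weighted_average(signals):+.3f}")
```

The 60-day-old email still contributes with a small positive weight, so it is down-weighted rather than discarded, while yesterday's call dominates the result.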

Historical context is retained across the timeline. A competitor mentioned 60 days ago stays encoded. If a requirement appears early and is later resolved, the model links both moments and updates its interpretation. Earlier signals provide context, while newer signals determine momentum.

This design has two outcomes. First, the forecast reacts quickly to real changes. If a champion goes cold, the score drops in the next cycle. If a budget holder re-engages, the win probability moves up just as quickly.

Second, it distinguishes similar deals based on timing and trajectory. A deal stalled for months is different from one stalled last week. Both may look identical in CRM, but the LQM interprets them differently because recency and progression drive outcomes.

Signal Compression

After semantic processing and temporal weighting, the LQM works with high-dimensional representations that capture rich meaning but are expensive at scale. The signal compression stage resolves this.

Embedding vectors from the semantic engine are distilled into compact feature vectors. This preserves semantic and temporal intelligence while reducing each deal to a manageable set of dimensions for efficient scoring.

Think of it this way: the transformer produces a rich, high-resolution image of what a piece of text means. Signal compression is the process of encoding the essential content of that image into a format small enough to sit alongside hundreds of other features without losing what matters.

These vectors are not human-style summaries. They are mathematical representations where each dimension encodes a learned attribute such as urgency, stakeholder depth, sentiment trajectory, or commitment intensity. These dimensions are learned during training, so the model identifies what actually predicts outcomes rather than relying on assumptions.

The result is a representation that is both compact and information-rich. These compressed vectors, combined with structured CRM features, form the input to the final LQM score.
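The shape of this step can be sketched with a simple linear projection. The example below uses PCA computed via SVD as a stand-in: PCA is unsupervised, whereas the article describes dimensions learned against deal outcomes during training, so this illustrates the reduction and concatenation only. All sizes and data are made up.

```python
import numpy as np


def fit_projection(embeddings, n_components):
    """Fit a PCA-style projection using SVD on mean-centered embeddings."""
    mean = embeddings.mean(axis=0)
    _, _, vt = np.linalg.svd(embeddings - mean, full_matrices=False)
    return mean, vt[:n_components].T  # shapes: (d,), (d, n_components)


def compress(embeddings, mean, components):
    """Project embeddings onto the retained components."""
    return (embeddings - mean) @ components


rng = np.random.default_rng(0)
embeddings = rng.normal(size=(200, 64))  # 200 interactions, 64-dim stand-in embeddings
mean, components = fit_projection(embeddings, n_components=8)
compact = compress(embeddings, mean, components)

crm_features = rng.normal(size=(200, 5))  # stand-in structured CRM features
model_input = np.hstack([compact, crm_features])
print(compact.shape, model_input.shape)
```

In a supervised setup the projection would be trained jointly with the scoring model, so the retained dimensions track what predicts outcomes rather than what merely varies most.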

The LQM Score

The final stage of the pipeline assembles everything into a single output: a win probability score per deal, updated on every snapshot cycle.

Here, 150+ features per deal are fused into a single estimate. These include compressed semantic vectors, temporally weighted CRM signals, engagement velocity, stakeholder depth, and behavioral patterns. The model is trained end to end on historical outcomes, so it learns which feature combinations predict results rather than relying on preset rules.

The score is not rule-based. There is no fixed formula. The LQM models nonlinear relationships across hundreds of features, including cross-signal patterns that emerge only when CRM data, email activity, and conversation intelligence are combined.
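To show what "no fixed formula" can look like in code, here is a toy two-layer network that maps a fused feature vector to a probability. The architecture, layer sizes, and random untrained weights are assumptions for illustration; the article does not disclose the form of the LQM scoring model.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def win_probability(features, W1, b1, W2, b2):
    """Two-layer network: ReLU hidden layer, sigmoid output strictly between 0 and 1."""
    hidden = np.maximum(0.0, features @ W1 + b1)  # nonlinear mix of all input features
    return sigmoid(hidden @ W2 + b2)


rng = np.random.default_rng(0)
n_features, n_hidden = 13, 16  # arbitrary sizes for the sketch

# Untrained random weights; a real model would fit these to historical outcomes.
W1 = rng.normal(scale=0.3, size=(n_features, n_hidden))
b1 = np.zeros(n_hidden)
W2 = rng.normal(scale=0.3, size=n_hidden)
b2 = 0.0

deals = rng.normal(size=(4, n_features))  # four hypothetical fused feature vectors
scores = win_probability(deals, W1, b1, W2, b2)
print(scores.shape)
```

Each hidden unit combines every input before the nonlinearity, which is how a model of this kind can pick up cross-signal patterns that no single feature shows on its own.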

This fusion resolves a long-standing tradeoff.

CRM-only approaches typically achieve high precision, around 79 percent, but low recall of around 65 percent. They are usually right when they flag a win, but they miss roughly 1 in 3 deals that actually close. Conversation-only approaches reverse this, with recall around 76 percent but precision near 62 percent, capturing more potential wins but introducing noise that reduces trust.

The LQM delivers both. With 88 percent precision and 91 percent recall, it captures 9 out of 10 wins while reducing false signals. Fewer missed wins and fewer false commits, at the same time.
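For readers less familiar with the metrics, the sketch below defines precision and recall from confusion-matrix counts and compares the figures quoted in this section. The counts in the first example are hypothetical, and the F1 values are derived here from the quoted precision and recall; they are not reported in the article.

```python
def precision(tp, fp):
    """Of the deals flagged as wins, the share that actually closed."""
    return tp / (tp + fp)


def recall(tp, fn):
    """Of the deals that actually closed, the share the model flagged."""
    return tp / (tp + fn)


def f1(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r)


# Hypothetical counts: 100 deals close, 91 are flagged; 12 flagged deals do not close.
tp, fn, fp = 91, 9, 12
print(f"precision={precision(tp, fp):.2f}  recall={recall(tp, fn):.2f}")

# Precision and recall as quoted in the text.
reported = {
    "CRM-only": (0.79, 0.65),
    "Conversation-only": (0.62, 0.76),
    "LQM": (0.88, 0.91),
}
for name, (p, r) in reported.items():
    print(f"{name}: misses {1 - r:.0%} of actual wins, F1 = {f1(p, r):.2f}")
```

The share of missed wins is simply one minus recall, which is why raising recall without lowering precision is the meaningful improvement.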

Fusion in practice: The Quiet Close

A deal marked Stage 6 with contracts in progress and a close date next week looks late-stage in CRM, but that alone does not confirm real momentum.

Conversation data shows minimal activity with only two emails in a month, which might suggest risk. But both are purchase order confirmations, and a call includes “award finalized.”

This changes the picture. The deal is quiet because the decision is already made and only final paperwork remains.

Taken separately, these signals look weak. Combined, they clearly indicate a deal nearing closure. The LQM connects these signals and correctly flags it as a high-confidence win despite low activity.

| View | What You See | Interpretation |
|---|---|---|
| CRM-Only | Stage 6, contracts in progress, close date next week | Looks strong structurally, but lacks proof of momentum |
| Conversation-Only | Only 2 emails, but with PO confirmations and “award finalized” | Low activity suggests risk, despite strong signals |
| LQM-Fusion | Late-stage deal + low but decisive signals aligned | High-confidence win, quietly closing with certainty |

Macro-Resilient by Design

Aviso’s LQMs incorporate external signals like geopolitical events, economic cycles, and shifts in buyer behavior alongside internal deal data.

Most models rely on historical CRM patterns, which reflect past conditions. When the market changes, they lag behind. LQMs adapt in real time, updating forecasts based on current conditions instead of outdated baselines.

What This Architecture Means for Revenue Leaders

For GTM leaders, the architecture produces four practical capabilities:

Forecast Confidence. Probability-weighted projections replace gut-feel calls. When the model says $6.5M of a $10M pipeline will close, that number is grounded in signals from every email thread and meeting, not a rep's optimism on a Monday morning.

Early Risk Detection. Conversation signals surface deal risk weeks before it appears in CRM. Email silence, unresponsive champions, and cancelled meetings are flagged in real time before a rep thinks to update the stage.

Deal Prioritization. Deal-level scores from the LQM tell reps which deals have real buyer engagement this week, not just which deals look good on paper.

Macro-Resilient Forecasts. The model incorporates external signals alongside pipeline data, adapting to budget freezes, geopolitical shifts, and buyer behavior changes rather than relying solely on historical CRM patterns.

Rethinking What a Forecast Actually Is

Bottom-up forecasting is only as good as the signals it can read. CRM data gives you structure but lags reality. Conversation data gives you early momentum but without grounding. Neither alone produces a number you can commit to.

Aviso's LQMs solve this by fusing all three data streams (CRM, email, and meetings) into a single unified model, trained end-to-end for each prediction target. The semantic engine, built on transformer neural networks with self-attention, learns what language means for deal outcomes. The quantitative engine assembles those learned representations alongside 150+ structured features per deal, updated every snapshot cycle.

The result is 88% precision and 91% recall simultaneously. Fewer missed wins. Fewer false commits. A forecast number grounded in what is actually happening across every deal in your pipeline.

This is why leading enterprises, including Druva, Nutanix, LogicMonitor, Lenovo, HPE, CDW, BMC, NetApp, and hundreds more, trust Aviso.

See how Aviso AI can transform your forecast into a number you can actually trust. Book a Demo now.