Sales reps face many challenges. They have to keep up with an ever-changing business landscape and continuously create value for their clients.

One of the critical traits of a successful salesperson is the ability to predict the likelihood of an opportunity closing in the current quarter.

With emotional intelligence, sales reps can connect with their prospects, understand their pain points, and even anticipate what they need and when they need it. But guesstimates can take you only so far, and relying on them will make you miss your quota more often than not.

The time sales reps spend on "dead" deals, only to realize that the customer cancelled or never planned to buy, is unproductive and demoralizing. Reps can also take a long time to learn that a customer has become non-responsive, and that delay can be costly.

Hunches are valuable to a salesperson only when they are backed by accurate and thorough data.

Reps need data and analytics to support their hunches and make better decisions. AI can provide data-driven insights for each opportunity and help reps make more accurate predictions about closing a deal.

A data-driven forecast outperforms one based on guesswork

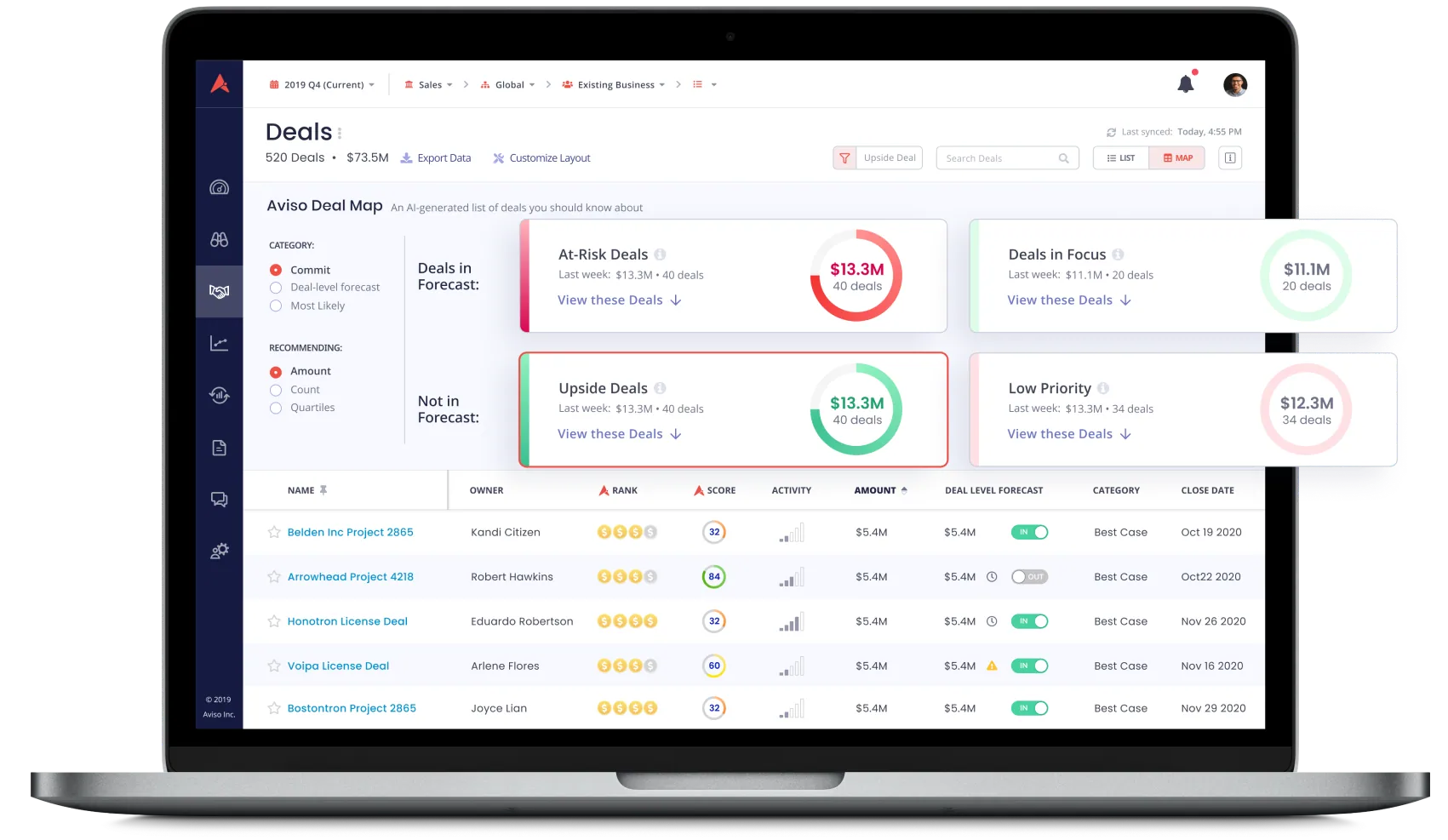

Let's look at how well Aviso AI Win Scores predict Wins and No-Wins on open deals in the quarter.

Most non-winning deals have a Win Score below 20%, whereas winning deals have a Win Score of 70-80% as early as week 4 of the quarter.

Aviso AI Win Scores play a crucial part in our AI forecasts

The bottom-up sales forecasting method evaluates the contribution of every deal to the forecast. It starts with sales projections from reps, who report what they think can close based on the opportunities they have lined up.

Aviso's AI Win Scores give clear visibility into how deals are progressing. Non-winning deals contribute relatively little to the forecast, while winning deals with higher probabilities contribute heavily, leading to a much more accurate forecast.
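A minimal sketch of how win scores could weight a bottom-up forecast, assuming each Win Score can be read as a probability between 0 and 1 and that a deal's contribution is its amount times that probability. The `Deal` class, the `weighted_forecast` function, and the pipeline figures are hypothetical; this illustrates probability weighting in general, not Aviso's forecasting method.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    name: str          # hypothetical deal identifier
    amount: float      # illustrative deal value in dollars
    win_score: float   # assumption: Win Score expressed as a probability in [0, 1]


def weighted_forecast(deals: list[Deal]) -> float:
    """Sum each deal's amount weighted by its win score (an expected-value forecast)."""
    return sum(d.amount * d.win_score for d in deals)


# Hypothetical pipeline: two likely winners followed by two likely non-winners.
pipeline = [
    Deal("A", 100_000, 0.80),  # high score: contributes heavily
    Deal("B", 50_000, 0.75),
    Deal("C", 200_000, 0.15),  # low score: contributes little despite its size
    Deal("D", 80_000, 0.10),
]

print(weighted_forecast(pipeline))
```

With these made-up numbers, the two likely winners contribute $117,500 of the roughly $155,500 weighted total, even though the two likely non-winners carry more pipeline value.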

What matters most in predicting when a deal will close

Some reps have a golden gut and can correctly predict which deals will close and which will not. Others are overly optimistic: they miss the target because some of their forecasted deals are not won. Still others, out of prudence or sandbagging, end up winning deals they thought could not be closed in the given quarter.

Let's say a sales rep has 10 deals in a quarter and predicts winning 7 of them and not winning the remaining 3 in the current quarter. The rep is faced with four possible scenarios:

1. All 7 deals predicted as ‘win’ are closed-won.

2. Some or all of these 7 deals predicted as ‘win’ are not closed in the current quarter.

3. All 3 deals predicted as ‘not-win’ are not closed in the current quarter.

4. Some or all of the 3 deals predicted as ‘not-win’ are won in the current quarter.

Scenarios 1 and 3 are what we call the True Positives and the True Negatives, respectively. These are the correctly predicted values: the actual class matches the predicted class. Scenarios 2 and 4 are the False Positives and False Negatives, respectively, where the actual class contradicts the predicted class.
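To make the four scenarios concrete, the sketch below labels each deal by comparing the rep's prediction with the actual outcome and counts the labels. The outcomes are hypothetical (5 of the 7 predicted wins close, and 1 of the 3 predicted no-wins closes anyway), since the example above does not say how the deals turned out; the `label` helper is likewise illustrative.

```python
from collections import Counter


def label(predicted_win: bool, actually_won: bool) -> str:
    """Map a (prediction, outcome) pair to its confusion-matrix category."""
    if predicted_win and actually_won:
        return "TP"  # scenario 1: predicted win, closed-won
    if predicted_win and not actually_won:
        return "FP"  # scenario 2: predicted win, not closed
    if not predicted_win and not actually_won:
        return "TN"  # scenario 3: predicted no-win, not closed
    return "FN"      # scenario 4: predicted no-win, but won


# The rep predicts 7 wins and 3 no-wins, as in the example.
predicted = [True] * 7 + [False] * 3
# Hypothetical outcomes: first 5 predicted wins close, next 2 do not;
# 1 of the 3 predicted no-wins closes, the other 2 do not.
actual = [True] * 5 + [False] * 2 + [True] * 1 + [False] * 2

counts = Counter(label(p, a) for p, a in zip(predicted, actual))
print(counts)
```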

A false positive is like a fire alarm going off when there is no fire. Think of a prospect you identified as the ideal candidate for cross-selling who never responded. False positives hurt your business in obvious ways: they lead to lost sales, threaten your brand reputation, and can cost you customers altogether.

On the other hand, a false negative is a deal that was not followed up on because it was assumed to fail, but that ultimately proved successful, often taking much longer to close because it was not nurtured properly. It could be a deal that did not seem promising and was consequently not pursued.

Aviso's AI Win Scores are more precise and influence outcomes more positively than relying on your gut alone

A commonly used tool for measuring the performance of a classification algorithm is the confusion matrix, which tabulates predicted classes against actual classes so that correct and incorrect predictions can be counted side by side. We looked at confusion matrices to compare projections from humans (reps) and from Aviso's AI with the final deal outcome:

True positives and true negatives are the correctly predicted observations and are shown in green. We want to minimize false positives and false negatives, so they are shown in red.

Confusion matrices can be used to calculate performance metrics for our models. Of the many performance metrics used, the most common are accuracy, precision, and recall.
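For reference, these are the standard definitions of the three metrics in terms of the confusion-matrix counts, where TP, TN, FP, and FN are the numbers of true positives, true negatives, false positives, and false negatives:

```latex
\begin{aligned}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN} \\
\text{Precision} &= \frac{TP}{TP + FP} \\
\text{Recall}    &= \frac{TP}{TP + FN}
\end{aligned}
```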

Accuracy: How accurate is my prediction about winning and not winning?

Accuracy measures the fraction of correct predictions your model made across the complete test dataset. We look at the accuracy metric to analyze how often we were correct when predicting whether a deal would be won or not.

Aviso AI Win Score predictions are 20% more accurate than the reps' predictions.

Precision: Was I precise about winning this deal?

Precision measures the correctness of positive predictions: of all the deals predicted to win, the share that actually won.

Going back to our earlier example of a sales rep with 10 deals in a quarter, there are two classes to predict: winning (7 deals, the positive prediction) and not winning (3 deals, the negative prediction).

Accuracy measures how often a model predicts the correct class, ‘win’ or ‘no-win’. But accuracy can be misleading when the classes are imbalanced. For instance, if 90 of 100 deals in a pipeline end up not winning, a model that predicts ‘no-win’ for every deal is 90% accurate while identifying none of the winning deals. Such a model has impressive accuracy, yet it is an apt example of why accuracy is not always the right metric.
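The sketch below illustrates why accuracy alone can mislead, using a hypothetical pipeline of 100 deals in which only 10 are won. The `metrics` helper and both "models" are illustrative assumptions: one always predicts ‘no-win’, the other flags 12 deals as wins, 9 of which actually close.

```python
from typing import Optional


def metrics(predicted: list[bool], actual: list[bool]) -> dict[str, Optional[float]]:
    """Compute accuracy, precision, and recall, treating True ('win') as the positive class."""
    pairs = list(zip(predicted, actual))
    tp = sum(p and a for p, a in pairs)
    tn = sum(not p and not a for p, a in pairs)
    fp = sum(p and not a for p, a in pairs)
    fn = sum(not p and a for p, a in pairs)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Precision is undefined (None) when the model never predicts a win.
        "precision": tp / (tp + fp) if tp + fp else None,
        "recall": tp / (tp + fn) if tp + fn else None,
    }


# Hypothetical pipeline: 10 deals won, 90 not won.
actual = [True] * 10 + [False] * 90

# A "model" that never predicts a win.
always_no_win = [False] * 100
print(metrics(always_no_win, actual))

# A hypothetical model that flags 12 deals as wins, 9 of which actually close.
selective = [True] * 9 + [False] + [True] * 3 + [False] * 87
print(metrics(selective, actual))
```

The always-‘no-win’ model scores 90% accuracy but has zero recall and no defined precision. The second model's accuracy (96%) is only a few points higher, but its precision (75%) and recall (90%) show that it actually finds winning deals.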

What you really want to measure is how many of the deals a model predicts as wins (the positive predictions) actually close!

Our analysis shows that Aviso AI Win Score has much higher precision when it comes to predicting the chances of winning a deal.

Aviso AI Win Score is 32% more precise than projections from humans (reps) in predicting the probability of winning a deal.

Remember: high precision means few false positives!

Recall: How do I identify which deals will close this quarter?

Recall, or the true positive rate, measures how many of all the actual positives in the dataset were predicted as positive.

Of all the deals that were ultimately won, how many did our model identify? Now that we know how often our model is correct overall (accuracy) and how reliable its ‘win’ predictions are (precision), it's time to find out how good it is at finding the deals that can be won in a given quarter: the ‘recall’ metric.

Aviso AI Win Score can help reps identify 71% of the deals they will win in the quarter, just 4 weeks after the quarter starts.

Performance achieved by Aviso AI tenants

Aviso tenants have achieved much higher deal-level coverage as early as the first month of any quarter, compared with human judgment for the same quarters. Of the 1,715 deals booked in total, AI identified 1,488 as closed-won, whereas humans predicted winning just 478 of them.

As early as week 4, AI identified 87% of the total won deals, significantly more than the reps' 28%.
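As a quick check on these figures, the 87% and 28% appear to be recall computed over the 1,715 booked deals. The sketch below uses only the counts stated above; the `recall` helper and constant names are illustrative.

```python
def recall(true_positives: int, total_won: int) -> float:
    """Share of all won deals that were flagged as wins (true positive rate)."""
    return true_positives / total_won


TOTAL_WON = 1715       # deals booked (closed-won), as reported above
AI_IDENTIFIED = 1488   # won deals the AI identified as closed-won
REP_IDENTIFIED = 478   # won deals the reps predicted as wins

print(f"AI recall:  {recall(AI_IDENTIFIED, TOTAL_WON):.0%}")
print(f"Rep recall: {recall(REP_IDENTIFIED, TOTAL_WON):.0%}")
```

Rounded to whole percentages, this prints 87% for the AI and 28% for the reps, matching the reported coverage.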

The early visibility into the potential closed-won pipeline that Aviso's AI Win Scores provide helps reps focus their effort and resources on deals with a higher likelihood of closing in the current quarter, improving their productivity. Aviso AI's Win Score also gives the company better predictability in its revenue schedules, especially now, when revenue leakage is a severe threat contributing to a Gray Rhino-like environment in the global market.

Conclusion

Accurate deal-level forecasting is essential for sales reps, but it is often difficult to predict the outcome of a deal in advance without an intelligent system in place. Human judgment has its own biases and cannot truly assess a deal's potential. Sales reps can overcome this difficulty with AI-based revenue forecasting solutions, which analyze each deal from several dimensions using large historical datasets and learn patterns to produce more accurate predictions than humans could make alone.

Aviso uses advanced algorithms to compute an AI Win Score for each deal. Aviso AI's deal-level Win Scores uniquely triangulate the signals that a deal will or will not be won, which reps can use to plan their quarter's activities well ahead of time. Win Scores use ML technology to combine deal-closing patterns, rep performance, and activity history with prospects. This gives reps a winning edge in directing their deal activities and resources toward the deal volume and pipeline they can close most efficiently in the quarter.

Say goodbye to hunches!

Try our AI model today to outsmart your competitors and grow your business.
